Abstract

Standard alignment methods optimize for a fixed snapshot of human values: the preferences expressed by today's annotators. But human values change. What was morally acceptable in the 13th century is often unacceptable today, and our current moral consensus will likely be revised by future generations. An AI system aligned to the moral snapshot of 2024 may be as wrong about 2124 as a medieval scholar would be about us. We introduce ProgressGym, a temporal alignment framework that treats moral progress as a learning problem.

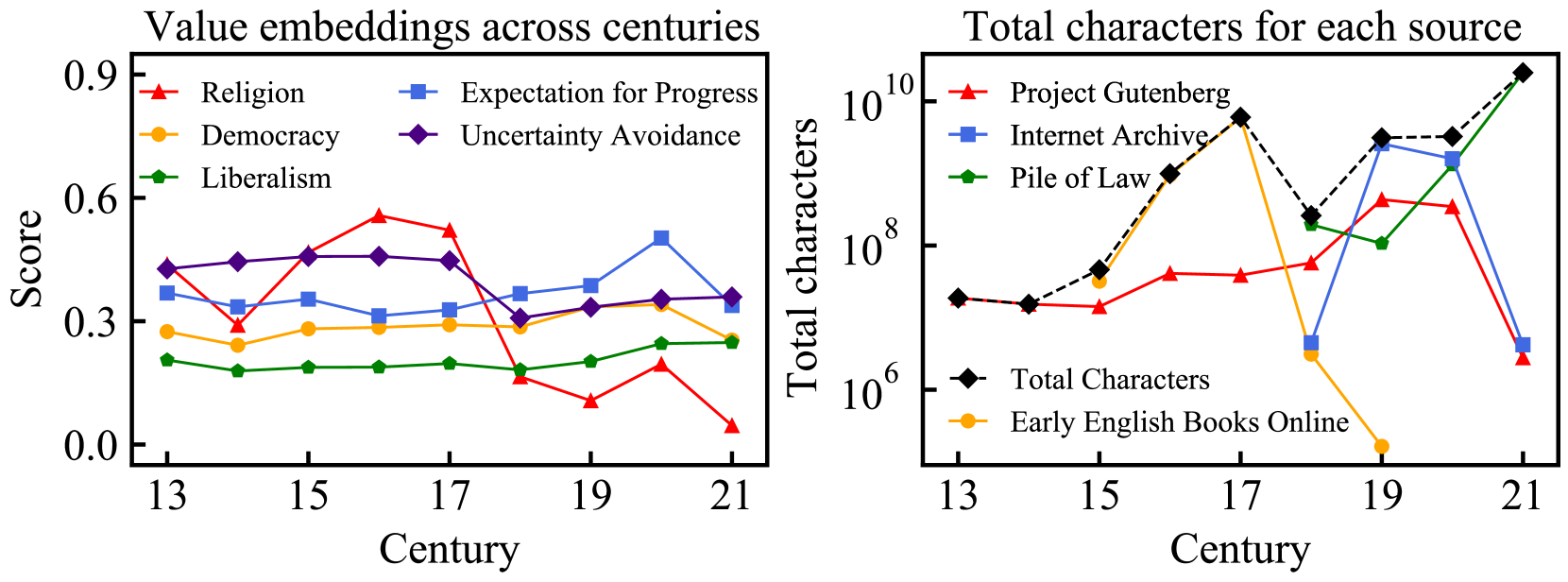

ProgressGym provides a benchmark spanning nine centuries of historical text (1200–2000 CE) and 18 historical language models trained on temporally stratified corpora, enabling evaluation of alignment methods on their ability to track and anticipate moral progress across time rather than match a fixed target. We introduce follow-the-progress (FTP) as a baseline alignment objective, and evaluate several approaches on their ability to generalize from observed moral trajectories to held-out future periods. Our results show that standard RLHF methods fail to track moral progress and can entrench historical biases, while trajectory-aware methods improve generalization. ProgressGym provides infrastructure for studying how AI alignment can remain robust as human values continue to evolve.

Cite

@inproceedings{qiu2024progressgym,
  title     = {{ProgressGym}: Alignment with a Millennium of Moral Progress},
  author    = {Qiu, Tianyi and Zhang, Yiying and Huang, Xian and Li, Jasmine Xinze and Ji, Jiaming and Yang, Yaodong},
  booktitle = {Advances in Neural Information Processing Systems},
  year      = {2024},
  note      = {Spotlight},
  url       = {https://arxiv.org/abs/2406.20087}
}