Stay True to the Evidence: Martingale Score for Bayesian Rationality in LLM Reasoning

Abstract

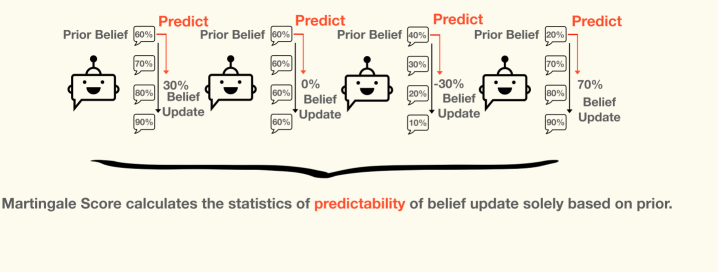

A rational reasoner should update beliefs in proportion to the evidence: each step of reasoning should move beliefs toward the truth, not away from it. Formally, beliefs should form a martingale with respect to the evidence sequence: conditional on all information so far, the expected future belief equals the current belief, so the direction of the next update cannot be predicted from the current belief alone. We introduce the Martingale Score, an unsupervised, regression-based metric that measures how far a language model's sequential reasoning deviates from this Bayesian ideal.
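To make the martingale condition concrete, here is a minimal sketch of one way a regression-based check could work. It is an illustration under stated assumptions, not the paper's exact estimator. It assumes beliefs are scalar probabilities recorded before and after a reasoning step, and it regresses the belief update (posterior minus prior) on the prior. Under the martingale property the slope should be near zero. A positive slope means a high prior predicts a further increase, which is the signature of belief entrenchment. The function name `martingale_slope` and the simulated agents are hypothetical.

```python
import random


def martingale_slope(priors, posteriors):
    """OLS slope of the belief update (posterior - prior) on the prior.

    Illustrative assumption: a slope near 0 is consistent with the
    martingale property; a positive slope means updates are predictable
    from the prior in the direction of entrenchment.
    """
    n = len(priors)
    if n != len(posteriors) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    updates = [q - p for p, q in zip(priors, posteriors)]
    mean_p = sum(priors) / n
    mean_u = sum(updates) / n
    var_p = sum((p - mean_p) ** 2 for p in priors)
    if var_p == 0:
        raise ValueError("prior beliefs must vary to estimate a slope")
    cov = sum((p - mean_p) * (u - mean_u) for p, u in zip(priors, updates))
    return cov / var_p


# Synthetic data only (no real model outputs): two simulated agents.
rng = random.Random(0)
priors = [rng.uniform(0.2, 0.8) for _ in range(20000)]

# Calibrated agent: the outcome is true with probability equal to the prior.
# Half the time the evidence reveals the outcome, otherwise nothing changes,
# so the expected posterior equals the prior.
rational = []
for p in priors:
    outcome = 1.0 if rng.random() < p else 0.0
    rational.append(outcome if rng.random() < 0.5 else p)

# Entrenching agent: ignores evidence and pushes each belief away from 0.5.
entrenched = [p + 0.3 * (p - 0.5) for p in priors]

rational_slope = martingale_slope(priors, rational)
entrenched_slope = martingale_slope(priors, entrenched)
print(f"rational slope:   {rational_slope:+.3f}")
print(f"entrenched slope: {entrenched_slope:+.3f}")
```

The check needs no ground-truth labels, only pairs of beliefs, which is what makes a score of this kind usable without supervision. In this sketch the entrenching agent's slope is 0.3 by construction, while the calibrated agent's slope differs from zero only by sampling noise.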

Applying the Martingale Score to a range of LLMs on diverse reasoning tasks, we find that iterative reasoning (chain-of-thought, self-critique, multi-turn dialogue) often deepens confirmation bias rather than advancing truth-seeking: models become more confident in their initial conclusions regardless of what the evidence warrants. Where ground-truth labels are available, the Martingale Score predicts accuracy even though computing it requires no labels, which makes it usable as an unsupervised proxy for reasoning quality. Our results suggest that more reasoning steps do not uniformly improve epistemic outcomes, and that standard benchmarks may miss systematic biases in how models integrate new information.

Cite

@inproceedings{he2025martingale,
  title     = {Stay True to the Evidence: Martingale Score for Bayesian Rationality in {LLM} Reasoning},
  author    = {He, Zhonghao and Qiu, Tianyi and Shirado, Hirokazu and Sap, Maarten},
  booktitle = {Advances in Neural Information Processing Systems},
  year      = {2025},
  url       = {https://arxiv.org/abs/2512.02914}
}